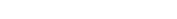

However, the next evolution, or perhaps even revolution, in robotics is the service robot: a robot that supports us outside the protected shop floor, for example at our workplace or at home. Service robots that work alongside us in our everyday environment need many more abilities. Unlike industrial robots, their focus is not on absolute precision of movement but on understanding environments and situations, localizing themselves within our highly complex surroundings, and interacting flexibly with objects and people. In other words, service robots have to be more “human” than industrial robots, without necessarily looking like humans. It therefore makes sense to look to nature and use the concepts found in humans and animals as a source of inspiration.

Our musculoskeletal system consists of joints, muscles and tendons. Unlike the joints in today’s robots, which are rigidly connected via a gearhead, we humans have an elastic coupling in the form of tendons. This coupling lets us store energy and protects our skeleton against hard impacts. It also makes force-regulated interaction with our surroundings possible. Inspired by this natural system, engineers have built so-called series elastic actuators (SEA), which place a spring element downstream of the motor and gearhead. The spring protects the gearhead against impacts, permits very accurate measurement of the forces involved in interactions and enables a highly efficient gait with energy savings of up to 70 percent. Such SEA drives can be found, for example, in the ETH robot ANYmal, sold by ANYbotics.
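
The force measurement that the spring makes possible follows from Hooke’s law: with a spring of stiffness k between gearhead and joint, measuring the angle on both sides of the spring gives its deflection, and the transmitted torque is k times that deflection. The minimal Python sketch below assumes a linear torsional spring and two angle encoders; the class name, method names and the 200 N·m/rad stiffness are hypothetical illustrations, not ANYmal’s actual drive parameters or controller.

```python
from dataclasses import dataclass


@dataclass
class SeriesElasticActuator:
    """Toy SEA model: a torsional spring sits between gearhead output and joint.

    stiffness: spring constant k in N·m/rad (hypothetical value, assumed linear).
    """
    stiffness: float

    def __post_init__(self) -> None:
        if self.stiffness <= 0:
            raise ValueError("spring stiffness must be positive")

    def torque(self, theta_gear: float, theta_joint: float) -> float:
        """Joint torque from the measured spring deflection (Hooke's law)."""
        return self.stiffness * (theta_gear - theta_joint)

    def stored_energy(self, theta_gear: float, theta_joint: float) -> float:
        """Elastic energy currently held in the spring: 0.5 * k * deflection**2."""
        deflection = theta_gear - theta_joint
        return 0.5 * self.stiffness * deflection ** 2

    def gear_setpoint(self, tau_desired: float, theta_joint: float) -> float:
        """Gearhead angle that deflects the spring just enough to produce tau_desired."""
        return theta_joint + tau_desired / self.stiffness


if __name__ == "__main__":
    sea = SeriesElasticActuator(stiffness=200.0)  # hypothetical 200 N·m/rad spring
    print(round(sea.torque(0.05, 0.0), 3))         # 10.0 (N·m)
    print(round(sea.stored_energy(0.05, 0.0), 3))  # 0.25 (J)
    print(round(sea.gear_setpoint(10.0, 0.3), 3))  # 0.35 (rad)
```

The `gear_setpoint` method hints at why this design suits force-regulated interaction: commanding a joint torque reduces to commanding a spring deflection, which a stiff, position-controlled motor and gearhead can track well.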

3D reconstruction of the environment by flying robots in real time, Autonomous Systems Lab, ETH Zurich

Where perception is concerned, artificial systems are also increasingly drawing closer to nature. Rapid progress in the cameras, inertial measurement units (IMUs) and microprocessors built into smartphones has made today’s robots capable of navigating in ways similar to humans. To this end, image data is combined with the accelerations and rotational speeds measured by the IMU. Much like the organ of balance in the human inner ear, the IMU provides a very fast estimate of motion, but this estimate slowly drifts because it has no reference to the environment. Fusing it with the image data compensates for the drift, which makes precise localization and 3D reconstruction of the environment possible (see image).
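
The drift argument can be made concrete with a deliberately simplified sketch. It reduces the problem to one dimension, models the IMU as a biased, noisy accelerometer, treats the camera as a noisy but drift-free position fix (an assumption; real systems track image features), and fuses both with a small linear Kalman filter that also estimates the accelerometer bias. The function `simulate` and all of its parameter values are hypothetical, and this is not the estimator used at ETH Zurich or in any particular robot.

```python
import numpy as np


def simulate(duration=10.0, dt=0.01, cam_every=10, accel_true=0.2,
             accel_bias=0.05, accel_noise=0.1, cam_noise=0.05, seed=0):
    """1D toy comparison of IMU-only dead reckoning vs. IMU + camera fusion.

    All parameters are hypothetical. Returns a tuple
    (fused position error, IMU-only position error, estimated accel bias).
    """
    rng = np.random.default_rng(seed)
    steps = int(round(duration / dt))

    p_true, v_true = 0.0, 0.0  # ground-truth position and velocity
    p_dr, v_dr = 0.0, 0.0      # IMU-only dead reckoning

    # Linear Kalman filter, state x = [position, velocity, accelerometer bias].
    x = np.zeros(3)
    P = np.diag([0.01, 0.01, 0.1])          # initial uncertainty (assumed)
    F = np.array([[1.0, dt, -0.5 * dt**2],
                  [0.0, 1.0, -dt],
                  [0.0, 0.0, 1.0]])         # bias is subtracted from the reading
    G = np.array([0.5 * dt**2, dt, 0.0])    # how the measured acceleration enters
    Q = np.outer(G, G) * accel_noise**2 + np.diag([0.0, 0.0, 1e-8])
    H = np.array([[1.0, 0.0, 0.0]])         # the camera observes position only
    R = np.array([[cam_noise**2]])

    for k in range(steps):
        # Ground truth and the biased, noisy accelerometer reading.
        p_true += v_true * dt + 0.5 * accel_true * dt**2
        v_true += accel_true * dt
        a_meas = accel_true + accel_bias + rng.normal(0.0, accel_noise)

        # IMU only: integrate twice, so the bias error grows with time squared.
        p_dr += v_dr * dt + 0.5 * a_meas * dt**2
        v_dr += a_meas * dt

        # Fusion, predict step: propagate the state with the same IMU reading.
        x = F @ x + G * a_meas
        P = F @ P @ F.T + Q

        # Fusion, update step: correct with a camera fix at a lower rate.
        if (k + 1) % cam_every == 0:
            z = p_true + rng.normal(0.0, cam_noise)
            y = z - H @ x                      # innovation
            S = H @ P @ H.T + R
            K = P @ H.T @ np.linalg.inv(S)     # Kalman gain, shape (3, 1)
            x = x + K @ y
            P = (np.eye(3) - K @ H) @ P

    return abs(x[0] - p_true), abs(p_dr - p_true), x[2]


fused_err, imu_err, bias_est = simulate()
print(f"IMU only: {imu_err:.2f} m | IMU + camera: {fused_err:.3f} m | "
      f"bias estimate: {bias_est:.3f} m/s^2")
```

Integrating a biased accelerometer twice makes the position error grow with the square of time, while each camera fix pulls the estimate back and lets the filter learn the bias. Visual-inertial systems in practice do this in 3D, typically with extended Kalman filters or nonlinear optimization over tracked image features.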

Nature is also a frequent source of inspiration for the learning skills of robots. Neural networks, a primitive imitation of the human brain, were first hyped in the 1980s and 1990s. Today the enormous increase in available computing power enables neural networks to deliver promising results in the segmentation and classification of data (e.g. images) and in learning motion sequences and characteristics. These approaches, which have gained new popularity under the name “Deep Learning”, help robots analyze complex situations and learn sequences on their own. Nevertheless, these learning abilities still lag far behind those of animals and humans.

All these new technologies have led to great progress in the field of service robots, but they also repeatedly reveal the limits of what is possible. As a robotics researcher, one thus gains great respect for the fascinating abilities of humans and animals, which, from today’s vantage point, seem unlikely ever to be equalled by artificial systems.

Author: Roland Siegwart, Professor of Autonomous Systems at ETH Zurich

ANYmal, Robotic Systems Lab, ETH Zurich